Early Language Development in Robots

Elio Tuci, Tomassino Ferrauto and Stefano Nolfi

Abstract

In this document we illustrate how a humanoid robot can be trained to acquire linguistic abilities analogous to those that children acquire during the very initial phase of language development. We also show how, in certain conditions, robots rewarded for the ability to comprehend a set of sentences display generalization abilities that allow them to comprehend new, never experienced sentences by producing new, never produced behaviors.

1. Introduction

There are three important aspects of early language development in children that we would like to stress. The first is that children develop an ability to comprehend language before they develop an ability to produce it. Comprehension thus precedes production, frequently by a rather large gap. Indeed, children begin to show evidence of holo-phrase/word comprehension around 9 months of age and start to produce their first words only later. By 18 months they typically comprehend about 50 words while producing only about 10. From a developmental robotics perspective this means that we want to train our robot to comprehend language before training it to produce language, since the ability to comprehend holo-phrases and words might be a prerequisite for the ability to produce words and phrases with a communicative intention.

A second important aspect concerns the way in which language is used in this early phase. The most frequent usage involves the caretaker producing a sentence that aims to trigger in the child an action achieving a given goal (for example the sentence "wash your hand"), followed by a reward signal (such as "very good") when the child produces an action that achieves the speaker's intended goal. Developing an ability to comprehend language, in this phase, thus implies developing an ability to react to a sentence in a given context by triggering an action that produces the goal intended by the caretaker. Another, related usage during this very early phase involves the caretaker producing a sentence that describes the action that the child has spontaneously triggered. This usage can facilitate the acquisition of the ability to comprehend language in the sense described above. The predominance of this type of language usage in the early phases is reflected by the fact that the large majority of words included in the first set of 10 comprehended words are action words, that is, words that elicit a specific action in the child or that describe the actions produced by the child. From a developmental robotics perspective this means that an appropriate way to model this process is to train the robot to respond to sentences by producing actions that achieve, in varying contexts, the goals conveyed by the sentences.

The third aspect to consider is that language development does not occur in isolation but is accompanied by the concurrent development of other important capacities, most notably action capacities. Indeed, the expansion of the repertoire of comprehended sentences/words is often accompanied by a corresponding expansion of action skills. This strict interdependence between language and action development is due to at least two reasons. On the one hand, the acquisition of a new action skill might be a prerequisite for the ability to comprehend a new sentence (i.e. for the ability to respond appropriately to that sentence). On the other hand, the perception of a linguistic stimulus in the right context and at the right moment can create the conditions for developing the action skill that is appropriate for that context. The co-development of linguistic and action skills thus constitutes a tightly integrated process. From a developmental robotics perspective this implies that robots should be put in a position to progressively and concurrently expand and improve both their linguistic and their action skills, and should be provided with an integrated control system in which modifications affecting action production influence language comprehension and vice versa.

To investigate these issues we set up a series of experiments in which an iCub humanoid robot is trained to comprehend simple sentences (formed by combining one of three action words: indicate, touch, and move, with one of three object words: red, green, and blue object) by performing actions that achieve the corresponding goals (Tuci et al., 2011). In other words, the robot is trained to comprehend the meaning of a set of simple sentences, to develop the ability to display context-dependent behavior achieving the goal conveyed by the sentences, and to react to each sentence by triggering the appropriate behavior.

Video 1. The behavior displayed by a robot in simulation at the end of the adaptive process during a series of trials in which the robot responds to different imperative sentences.

2. Method

The robot is provided with a neural controller that receives as input information from the tactile sensors placed on the robot's right hand, visual information extracted from the camera, and pre-processed linguistic information encoding the action word and the object word included in the current sentence. The controller processes all this information within an internal layer of recurrent neurons and controls the joints of the right arm through four motor neurons that control the 2 DOFs of a two-segment arm (additional experiments involving a more complex robot morphology are reported below).
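The data flow of such a controller can be sketched as follows. This is only an illustrative sketch, not the controller used in the experiments: the class name `RecurrentController`, the helper `encode_sentence`, the one-hot sentence encoding, the leaky-integrator update, and all layer sizes except the four motor outputs are assumptions made for the example.

```python
import math
import random

ACTIONS = ("indicate", "touch", "move")
OBJECTS = ("red", "green", "blue")

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def encode_sentence(action, obj):
    """Hypothetical one-hot encoding: 3 action units followed by 3 object units."""
    return ([1.0 if a == action else 0.0 for a in ACTIONS]
            + [1.0 if o == obj else 0.0 for o in OBJECTS])

class RecurrentController:
    """Sensors -> recurrent internal layer -> motor neurons (sizes are assumptions)."""

    def __init__(self, n_in, n_hidden, n_out, seed=0):
        rng = random.Random(seed)

        def rand_matrix(rows, cols):
            return [[rng.uniform(-1.0, 1.0) for _ in range(cols)] for _ in range(rows)]

        self.w_in = rand_matrix(n_hidden, n_in)       # sensors -> internal layer
        self.w_rec = rand_matrix(n_hidden, n_hidden)  # internal layer -> itself
        self.w_out = rand_matrix(n_out, n_hidden)     # internal layer -> motors
        self.tau = [rng.uniform(1.0, 5.0) for _ in range(n_hidden)]  # time constants
        self.state = [0.0] * n_hidden

    def step(self, inputs):
        """Advance the internal layer by one time step and return motor activations."""
        activation = [sigmoid(s) for s in self.state]
        new_state = []
        for i, s in enumerate(self.state):
            drive = sum(w * x for w, x in zip(self.w_in[i], inputs))
            drive += sum(w * a for w, a in zip(self.w_rec[i], activation))
            # Leaky integration: larger time constants make the neuron change more slowly.
            new_state.append(s + (drive - s) / self.tau[i])
        self.state = new_state
        hidden = [sigmoid(s) for s in self.state]
        return [sigmoid(sum(w * h for w, h in zip(row, hidden))) for row in self.w_out]

# Hypothetical sensor readings: one tactile value and three visual values.
tactile = [0.0]
vision = [0.7, 0.1, 0.2]
sensors = tactile + vision + encode_sentence("touch", "red")

controller = RecurrentController(n_in=len(sensors), n_hidden=6, n_out=4)
motors = controller.step(sensors)
```

Because the internal layer keeps its state between calls to `step`, the same sentence can yield different motor commands at different moments of a trial, which is what allows a behavior to unfold over time.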

The connection weights and the time constants of the neurons are encoded as free parameters and trained, through a genetic algorithm, for the ability to produce the outcome indicated by the corresponding sentence. This means that the parameters are varied randomly and that variations are retained or discarded on the basis of how well the robot achieves the goal conveyed by the currently perceived sentence, that is, how well it aligns the forearm toward the appropriate object in the case of indicate object sentences, touches the object with the hand in the case of touch object sentences, and touches and moves the object in the case of move object sentences.
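The "vary randomly, then retain or discard" scheme described above can be illustrated with a minimal evolutionary loop. This is a sketch under stated assumptions rather than the algorithm actually used: the function `evolve`, its population size, mutation strength, and truncation selection are hypothetical choices, and `toy_fitness` is a stand-in for the real evaluation, which would run the robot on the training sentences and score how well each goal is achieved.

```python
import random

def evolve(fitness, n_params, generations=200, pop_size=20, sigma=0.1, seed=0):
    """Truncation selection with Gaussian mutation over real-valued parameter vectors."""
    rng = random.Random(seed)
    population = [[rng.uniform(-1.0, 1.0) for _ in range(n_params)]
                  for _ in range(pop_size)]
    for _ in range(generations):
        # Rank candidate parameter sets from best to worst.
        ranked = sorted(population, key=fitness, reverse=True)
        # Retain the better half, discard the rest.
        parents = ranked[: pop_size // 2]
        # Each retained parent produces one randomly varied copy.
        children = [[gene + rng.gauss(0.0, sigma) for gene in parent]
                    for parent in parents]
        population = parents + children
    return max(population, key=fitness)

def toy_fitness(genome):
    """Placeholder objective: higher is better, maximal when every gene equals 0.5."""
    return -sum((gene - 0.5) ** 2 for gene in genome)

best = evolve(toy_fitness, n_params=4)
```

In the robotic setting each genome would hold the connection weights and time constants of one controller, and the fitness would be averaged over trials with different sentences and object positions.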

To verify whether the robot is able to generalize the knowledge acquired during the training process to new circumstances, we trained the robot on only 7 of the 9 possible sentences that can be generated by combining the three action words and the three object words. During training the robot therefore never experiences the sentences move the blue object and touch the green object and is never rewarded for producing the corresponding behaviors.
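The split between trained and withheld sentences can be written down explicitly. The variable names below are illustrative only; the word lists and the two withheld sentences are those stated in the text.

```python
from itertools import product

ACTIONS = ("indicate", "touch", "move")
OBJECTS = ("red", "green", "blue")

# The two sentences that are never experienced during training.
HELD_OUT = {("move", "blue"), ("touch", "green")}

all_sentences = list(product(ACTIONS, OBJECTS))
training_set = [s for s in all_sentences if s not in HELD_OUT]
test_set = [s for s in all_sentences if s in HELD_OUT]

# Every word of a withheld sentence still occurs in some training sentence.
trained_actions = {action for action, _ in training_set}
trained_objects = {obj for _, obj in training_set}
```

Note that each action word and each object word of the withheld sentences still appears in the training set, so generalization requires recombining known words rather than interpreting unknown ones.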

Moreover, to verify whether compositionality in comprehension and in behavior generation is affected by the composition of the behavioral set, we replicated the experiments in a WITH-IGNORE condition, in which the word indicate is replaced with the word ignore (which means orienting the forearm in a different direction with respect to the relevant object). We refer to the original setup as the WITH-INDICATE condition.

At the end of the training process the robots successfully comprehend the 7 sentences on which they were trained by producing the appropriate corresponding behavior. The robots' neural controllers were trained in simulation and then successfully ported on a simulated iCub. On the real robot the movements of the arm joints are controlled, on the basis of the output of the neural network, through an inverse kinematics module developed by Pattacini et al. (2010). See Tuci et al. (2011) for more details.

3. Results

By post-evaluating the robots at the end of the training process we observed that some of those trained in the WITH-INDICATE condition also display an ability to comprehend the two new sentences by producing the corresponding appropriate behaviors. They do so even though they never experienced these sentences during training and were never rewarded, during the adaptive process, for correctly comprehending them or for producing the corresponding behaviors.

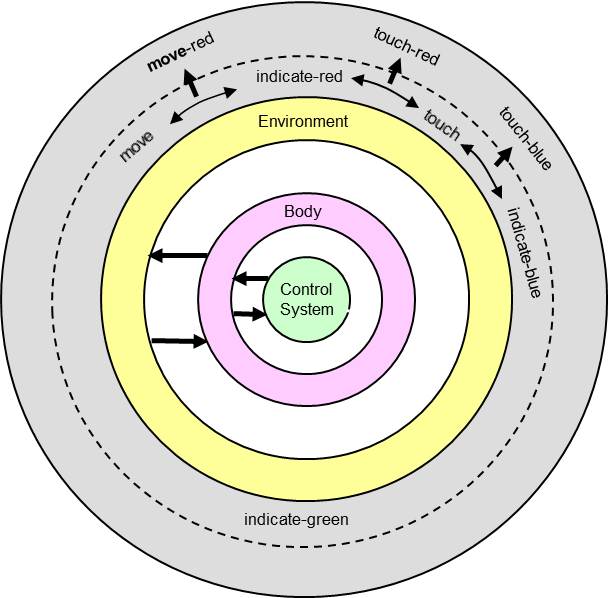

By analyzing the behavior of the agents in the WITH-INDICATE condition that generalize, we observed that the robots able to comprehend and execute the new sentences are characterized by a multi-level and multi-scale organization of behavior, i.e. they possess both lower-level elementary skills and higher-level behaviors that are produced by combining the lower-level actions in sequence and in parallel (see Figure 1).

Figure 1. Schematization of the multi-level organization of behavioral skills displayed by the adapted robots able to generalize

Indeed, these robots developed a set of elementary behaviors such as indicate-red, indicate-blue, and indicate-green (which consist in aligning the forearm toward the red, blue, or green object), touch (which consists in moving the hand, once the forearm is aligned with the object, until the tactile sensor detects the object, and which is executed in the same way independently of the object color), and move (which consists in moving the hand, once the forearm is aligned with the object, until the object is touched and then continuing to move the hand so as to move the object, again independently of the object color).

These elementary behaviors are combined to display the other required behaviors. For example, the TOUCH-RED behavior is realized by combining an INDICATE-RED behavior, which consists in aligning the arm with the red object and is executed during the entire trial, with a TOUCH behavior, which consists in bending the hand independently of the arm position and the visual state and is executed during the second part of the trial. The same TOUCH behavior is used to produce the TOUCH-BLUE action in combination with the INDICATE-BLUE behavior (see Figure 1). For a description of the analysis that indicates this multi-level organization see Tuci et al. (2011).
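The way a higher-level behavior is assembled from elementary ones can be summarized schematically. The sketch below is purely descriptive and does not reflect how the neural controller implements the combination: the function `compose` and the two-part trial representation are hypothetical, and applying the same timing to MOVE as to TOUCH is an assumption.

```python
def compose(action, color):
    """Return the elementary behaviors active in each part of a trial.

    The color-specific INDICATE behavior runs for the whole trial; the
    color-independent TOUCH or MOVE behavior is added in the second part.
    """
    indicate = f"INDICATE-{color.upper()}"
    first_part = [indicate]
    second_part = [indicate]
    if action in ("touch", "move"):
        second_part.append(action.upper())
    return {"first_part": first_part, "second_part": second_part}

touch_red = compose("touch", "red")
touch_green = compose("touch", "green")  # sentence withheld during training
```

Under this description a withheld sentence such as touch the green object requires no new elementary behavior: it reuses INDICATE-GREEN and TOUCH, both of which are exercised by trained sentences.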

Overall this indicates that robots trained to produce related skills (as in the WITH-INDICATE condition but not in the WITH-IGNORE condition) tend to synthesize structured solutions that enable skill re-use. Moreover, it shows how skill re-use enables compositionality and behavior generalization.

4. Extended experiments

We then scaled up the experimental scenario with respect to the complexity of the robot's sensory-motor system and the complexity of the action set.

More specifically, we ran a new set of experiments in which the robot's neural network directly controlled 2 DOFs of the head, 2 DOFs of the torso, the 7 DOFs of the arm and of the wrist, and a virtual DOF that controls the extension/flexion of all fingers. In the new experiments the robot was trained to reach, touch, or grasp a spherical object. The sensory system of the robot included visual information concerning the amount of each color perceived in the left/right and upper/lower parts of the visual field, proprioceptive sensors encoding the current position of the head and of the torso, a tactile sensor located on the palm, and the linguistic sensory units used to encode the imperative sentences (generated by combining the "reach", "touch", and "grasp" symbols with the "red", "green", and "blue" symbols). To enable the robot to pay attention to the target object and to ignore the other objects, we provided the robot's neural controller with three regulatory neurons that set the gain of the sensory neurons encoding red, green, and blue visual information (Ferrauto and Nolfi, in preparation).
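The effect of the regulatory neurons can be illustrated with a simple multiplicative gate. This is a minimal sketch assuming that each gain multiplies the corresponding color channel; the function `gate_visual_input`, the four-value layout per color, and the numeric values are hypothetical and not taken from the experiments.

```python
def gate_visual_input(color_inputs, gains):
    """Scale each color channel of the visual input by its regulatory gain."""
    return {color: [gains[color] * value for value in values]
            for color, values in color_inputs.items()}

# Hypothetical visual readings: amount of each color seen in the
# left, right, upper, and lower parts of the visual field.
color_inputs = {
    "red": [0.8, 0.1, 0.5, 0.4],
    "green": [0.2, 0.6, 0.3, 0.5],
    "blue": [0.0, 0.3, 0.1, 0.2],
}

# Hypothetical regulatory outputs while attending to the red object.
gains = {"red": 1.0, "green": 0.0, "blue": 0.0}

gated = gate_visual_input(color_inputs, gains)
```

With gains like these only the target color reaches the rest of the network, so the same reaching or grasping behavior can be directed at whichever object the sentence names.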

Video 2. The behavior displayed by a robot at the end of the adaptive process during a series of trials in which the robot responds to the "Reach red", "Touch Yellow", and "Grasp Red" sentences (top, centre and bottom, respectively).

By subjecting these neural controllers to an evolutionary process we managed to obtain, also in this case, robots able to comprehend the imperative sentences by producing the corresponding appropriate behavior. The robots' neural controllers were evolved in simulation and then successfully ported to the real robot (Video 2).

5. Conclusions

The experiments illustrated here cover an admittedly minimal language and action repertoire and neglect important aspects, such as the role of the sequential nature of language. Despite this, the proposed model seems to capture some of the fundamental aspects that underlie language grounding, compositionality, and behavior generalization.

Interestingly, the results indicate that compositionality might derive not only from the way in which concepts/meanings are represented but also from the way in which actions are generated.

Moreover, the results suggest that the integration of the acquired language and action knowledge can enable forms of generalization at the level of behaviors that allow the robots to spontaneously produce new (never produced) behaviors in response to new (never experienced) sentences.

References

Cangelosi A., Metta G., Sagerer G., Nolfi S., Nehaniv C., Fischer K., Tani J., Belpaeme T., Sandini G., Nori F., Fadiga L., Wrede B., Rohlfing K., Tuci E., Dautenhahn K., Saunders J., and Zeschel A. (2010). Integration of action and language knowledge: A roadmap for developmental robotics, IEEE Transactions on Autonomous Mental Development, vol. 2, no. 3, pp. 1-28.

Ferrauto T. and Nolfi S. (in preparation). Action integration and recombination in adaptive humanoid robots.

Pattacini U., Nori F., Natale L., Metta G., and Sandini G. (2010). An experimental evaluation of a novel minimum-jerk Cartesian controller for humanoid robots. In Proceedings of the IEEE/RSJ International Conference on Intelligent Robots and Systems.

Sandini G., Metta G., and Vernon D. (2007). The iCub cognitive humanoid robot: An open-system research platform for enactive cognition. In M. Lungarella, F. Iida, J. Bongard, and R. Pfeifer (Eds.) 50 Years of Artificial Intelligence, Springer Verlag, Berlin, Germany, pp. 358-369.

Tuci E., Ferrauto T., Zeschel A., Massera G., and Nolfi S. (2011). An Experiment on Behaviour Generalisation and the Emergence of Linguistic Compositionality in Evolving Robots, IEEE Transactions on Autonomous Mental Development, vol. 3, no. 2, pp. 176-189.