An iCub Evolving Reaching Skills

Gianluca Massera and Stefano Nolfi

1. Introduction

In this document we describe how a simulated iCub neuro-robot can acquire the capability to reach with its left arm any arbitrary target position in its peripersonal space by controlling 6 actuated joints (2 joints of the iCub's torso and 4 joints of the iCub's left arm). The connection weights of the robots' neural controller are adapted through an evolutionary method for the ability to minimize the average distance between the left hand of the robot and the target location averaged over several trials in which the robot has to reach different target positions. We also explain how this experiment can be replicated by using FARSA

Fig. 1. The robot and the environment. The white spots are the position on which the reaching capabilities are tested.

2. Method

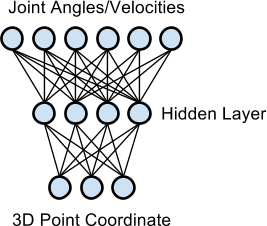

The robot's neural controller (Figure 2) is provided with three sensory neurons that encode the position of the target object in Cartesian coordinates, four internal neurons, and six motor neurons that control the desired angular position or velocities (depending on experimental setup, see below) of the six actuated DOFs (i.e. the rotation and the extension/flexion of the torso; the extension/flexion, the abduction/adduction and the supination/pronation of the arm; the extension/flexion of the forearm). The sensory neurons are fully connected to internal neurons that are fully connected to motor neurons.

Fig. 2. The architecture of robot's neural controller.

To verify the role of the sensory feedback during the robot/environment interactions we ran two sets of experiments:

- Steady-encoding experiments where:

- the sensory neurons encode the offset of the current target position along the X, Y, and Z axes, normalized in the range [0,1], with respect to centre of the iCub body, and

- the motor neurons encode the desired angles for the final posture of the arm. The offset between the desired and the target angular positions are then used to set the velocity of the joint motors on the basis of a simple proportional controller.

- Unsteady-encoding experiments where:

- the three sensory neurons encode the offset of the current target position along the X, Y, and Z axes, normalized in the range [0,1], with respect to the centre of the left palm, and

- the motor neurons encode directly the velocity of the joint motors.

In the latter case the robot can use the perceptual feedback of its own actions to refine its behaviour while it interact with the environment. In the former case, instead, the sensory state does not change while the robot move and consequently the robot cannot exploit the sensorial effects of its own actions. Moreover, the sensorial information perceived in the unsteady-encoding correlate directly to the extent to which the robot has successfully carried out its action.

During evolution the robots are evaluated for 400 trials on their ability to reach a target position. Each trial lasting up to 17.5 sec, during which the robot on evaluation should reach 400 corresponding target positions extracted from a set of 2304 reachable positions. The 2304 reachable points have been calculated by storing the position that the centre of the robot's left palm assumed when the six actuated joints were moved systematically within predefined values.

Furthermore, to favour the selection of robots that are able to generalize their abilities to any possible reachable position, the target position experienced during each trial was randomly chosen within a 2 cm diameter spherical area centred around the current extracted reachable position.

The fitness is inversely exponentially proportional to the distance between the robot's left palm position and the target position measured at the end of each trial.

To verify whether an incremental adaptive process can lead to better performances, we ran an additional set of experiments referred below as incremental.

More specifically, to simulate the condition on which the problem is initially simplified and become progressively harder as soon as the capacities of the robots improve, the robots are rewarded with the maximum fitness during trials in which the distance between robot's left palm position and the target position is below a threshold. This threshold is initially set to 5 cm at generation 0 and it is progressively reduced of 20% after a generation in which the average fitness of all individuals is greater than 0.6, and it is definitely set to 0.0 when it becomes lower than 1 cm.

2. Results

The combination of the steady versus unsteady encoding and incremental versus non-incremental adaptive process leads to 4 sets of experiments. For each experiments six replications starting from different randomly generated populations were ran.

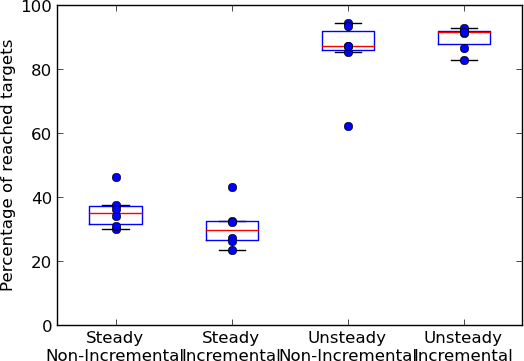

Evolved individuals were then post-evaluated on the entire set of 2304 reachable target locations by calculating percentage of target location reached with an accuracy of at least 5 cm.

By analysing the performance of the best evolved robots in the four experimental conditions (see Figure 3), we can see how the individuals evolved in the unsteady condition significantly outperform those evolved in the steady condition. This results confirms that the possibility to exploit the sensory feedbacks caused by the robot's actions and/or the availability of an information that strongly correlate with the extent to which the robot successfully accomplished the current action strongly facilitate the development of the effective solutions.

The comparison of the performance obtained in the incremental versus non-incremental experimental conditions does not reveal significant differences.

Overall the analysis of the results in the best experimental conditions indicates how the adapted robots can reach close to optimal performance. This is a remarkable results given the simplicity of the neural controller and given that some of the target located in peripheral areas of the robot's peripersonal space are hard to reach due to the limits and constraints that affect the robot's movements in these regions.

Fig. 3. Percentage of target locations reached with an accuracy of at least 5 cm (i.e. with a distance between the centre of the palm and the centre of the target location less or equal to 5 cm). The four box plot shows the distribution of performance of the 6 best robots of each of experimental condition.

3. Replicating the experiment with FARSA

This study is included between the exemplificative experiments of FARSA a free open software tool for Autonomous Robotics Simulation and Analysis.

To replicate and vary it you need to install the FARSA tool run the Total99 graphic interface, load the .ini project configuration file contained in the subdirectory of "ReachExperiment" corresponding on which experimental setup you want to load, and finally run the experiment by clicking on the "Create/configure experiment" icon.

If the program does not file the .dll plugin file of the experiment load it manually from the directory in which it is located with the file->load_plugin command or include the right directory in the Plugin Path graphic window, so that the program can find it automatically.

The example includes the results of all evolutionary processes mentioned above. So the first think you might want to do is to open the statistic viewer widget (from the View Menu) and then press the "Load All Stat" button to see how the fitness varied throughout generations in the replications of the experiments.

You can then choose an individual to test by opening the "Individual to test" widget, selecting a B0SX.gen file (where X is the seed of the replication) and then the best individual of a specific generation (from 0 to 3000) by clicking over the generation number. Then you can open the RenderWorld graphic widget and observe the behaviour of the selected robots with the Test->Selected individual command. You can also observe the architecture and the parameters of the network by opening the Evonet->Nervous_System graphic widget and the activation state of the neurons over time by opening the Evonet->Neurons_Monitor graphic widget.

You can observe the parameters in the "Parameters" panel of the Total99 graphic interface. In case you want to run a new evolutionary experiment you can change the seed parameter and any other paremeter you like after loading the .ini file but before configuring the experiment. Once the parameters are set you can then configure the experiment and run the evolutionary process through the graphic interface (with the command action->evolve) or better you can ran the evolutionary process in bath mode with the "total99 --batch --file=configuration.ini --action=evolve" command.

The source code of the experiment plugin is included in the directory of the experiment described above. You can browse and vary the source code too. For more detailed information on FARSA see the

References

Massera G., Ferrauto T., Gigliotta O., Nolfi S. (2013) Designing adaptive humanoid robots through the FARSA open-source framework. Submitted.