Evolution and self-organization in Swarm of Robots

Valerio Sperati, Vito Trianni, Stefano Nolfi

1. Objectives

The design of the control system for a swarm of robots is not a trivial enterprise. Above all, it is difficult to define which control rules can lead to a desired collective behaviour given that the global behaviour exhibited by the system is the emergent results of the interaction between the behaviours exhibited by each individual which in turn is the emergent result of the fine-grained interaction between the control system, the body, and the physical and social environment.

For this reason, evolutionary or learning processes have been widely used to automatically synthesise group behaviours.

In the experiments reported in this page we focus on the role the self-organization can play in a swarm of evolving individuals. More specifically, in the work described in the next section, we demonstrate how an evolutionary process driven by implicit and general purpose utility function can spontaneously lead to structured and coordinated behaviours.

2. Evolving coordinated group behaviours through maximization of mean mutual information

In the experiment reported in Sperati, Trianni & Nolfi (1998), we investigated the use of information-theoretic concepts such as entropy and mutual information as utility functions for mobile robots, which adapt on the basis of an evolutionary process. In particular, we investigated whether the use of a utility function that maximises the mutual information in state and time between the motor states of wheeled robots leads to the evolution of a variety of articulated and coordinated behaviours.

We rationale behind this experiment is the assumption that implicit and general purpose utility functions - fitness functions or reward/error measures - can allow evolution or learning to explore their search space more freely, without being constrained by an explicit description of the desired solution. In this way, it is possible to discover behavioural and cognitive skills that play useful functionalities, and that might be hard to identify beforehand by the experimenter without an a priori knowledge of the system under study.

Such task-independent utility functions can be conceived as universal intrinsic drives toward the development of useful behaviours in adaptive embodied agents. A second relevant aspect of this approach, which will be investigated in future work, concerns the combination of implicit and task-independent utility functions with explicit and task-dependent ones, in order to favour the development of behavioural and cognitive skills that serve specific requested functionalities, but that would be hard to develop through explicit descriptions only.

2.1 The experimental setup

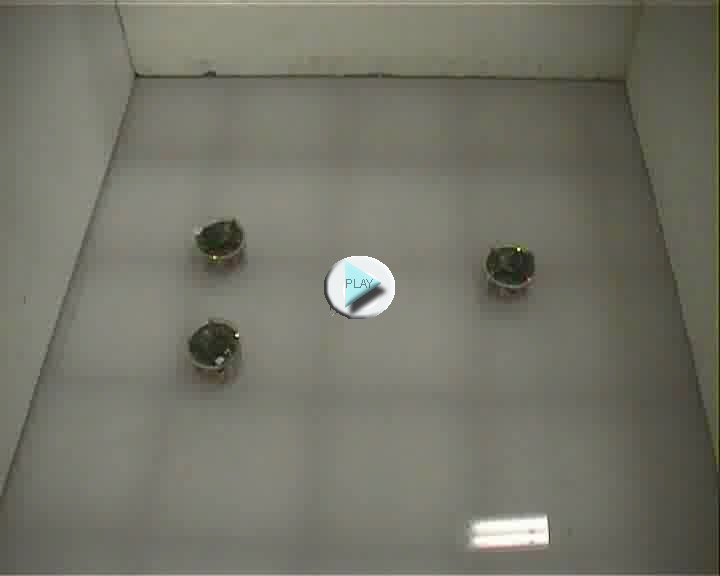

The experiment involves groups of 3 e-puck robots provided with a relatively reach sensors and motor systems that allow them to interact with their physical and social environment. More specifically, each robot is provided infrared proximity sensors, ambient light sensors, a VGA camera pointing in the direction of forward motion, two motors controlling the two corresponding wheels, 8 red LEDs distributed around their body that can be turned on or off, and a wireless bluetooth interface that could be used by the robot to send and receive signals (i.e. floating point values in the range [0.0, 1.0] to other robots.

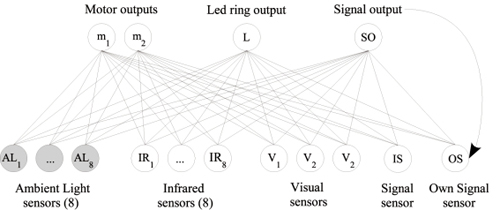

Figure 1. Left: A close up view of the environment with the light bulb in the centre and three robots. Right: The architecture of robots' neural controllers.

Each robot is controlled by a fully connected two layer neural network, provided with 20 sensory neurons that encode the state of the corresponding sensors (and of an efferent copy of the motor neuron controlling the produced signal), and three motor neurons that control the two motors and the signal produced.

The free parameters of the robot's neural controllers are adapted through an evolutionary process. The initial population consists of 100 randomly generated binary genotypes, which encode the connection weights, the bias terms and the time constants of 100 corresponding neural controllers. Each parameter is encoded by 8 bits, and its value is linearly scaled from the range [0, 255] to the range [-5.0, 5.0] in the case of connection weights and bias terms, and in the range [0.0, 0.95] in the case of time constants. The 20 best genotypes of each generation were allowed to reproduce by generating five copies each, with 4% of their bits replaced with a new randomly selected value, excluding one copy (elitism). The evolutionary process lasted 200 generations.

Each genotype is translated into 3 identical neural controllers which are downloaded onto three identical robots (i.e., the robots are homogeneous). Each team of 3 robots was tested for 10 trials, lasting 200 seconds (i.e., 2000 simulation cycles of 100 ms each).

Evolving individuals are selected on the basis of a fitness function which measures the Mutual Information MI between the motor states Xi of all possible robot pairs. The maximisation of MI should drive evolution towards the development of coordinated behaviours. In fact, high values of MI correspond to motor states that are positively correlated: the knowledge of motor state Xi gives information about motor state Xj and vice versa. In other words, Xi and Xj result from processes that we can describe as "coordinated". Moreover, since the maximisation of MI also requires the maximisation of the entropy of the motor state Xi of each robot, this fitness function rewards evolving robots for the ability to produce structured behaviours. In fact, entropy is maximised not only by very random behaviours, but also by very structured behaviours that systematically vary through time. In particular, periodic sequences of equally frequent elementary behaviours such as "move-forward", "move-backward", "turn-left" and "turn-right" allow the robot to uniformly cover many possible motor states, therefore maximising entropy.

2.2 Results

In this section, we describe the results obtained in the two experiments. In both experiments the evolved robots display behaviours that are structured (i.e., they consist of a sequence of atomic movements with varying time durations), periodic (i.e., the sequence of atomic movements is repeated through time), and coordinated (i.e., the different robots tend to produce the same structured behaviour in a synchronised manner).

In the first experiment, the robots are situated in a 1 m side square arena presenting a light bulb, which can be perceived by means of the robot's ambient light sensors.

The qualitative inspection of the obtained results indicates that the robots always display structured and coordinated behaviours (see videos below). Generally, the environmental cue offered by the light bulb is exploited by the robots to achieve the same relative position and to display a periodic, structured behaviour. Moreover, robots perform a coordinated behaviour through the synchronisation of their movements.

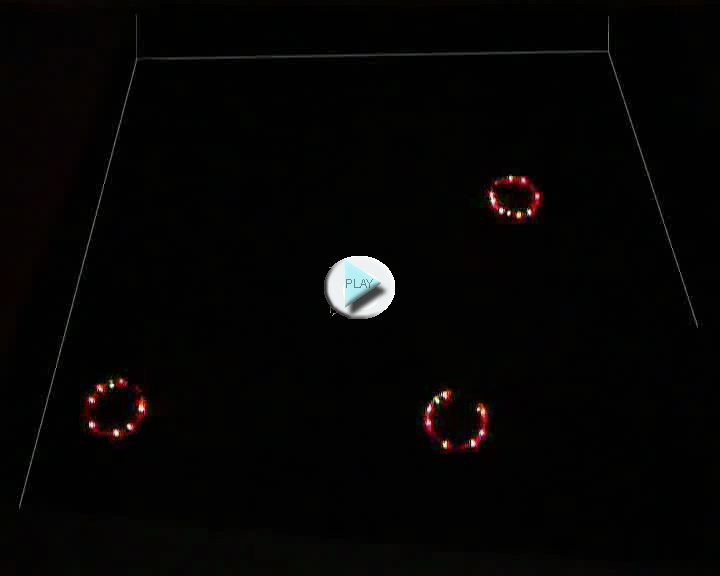

Evolutionary run 16: on left a test performed in simulation (single random trial corresponding about to 100 seconds). On right, a test performed with physical robots (5 random trials, each one lasting 200 seconds, videos speeded up to 4x). Note that the LEDs in real testing are switched on only to improve video quality.

Synchronisation is generally achieved through the exploitation of the communication signal only. Infrared sensors are generally exploited to avoid collisions with walls and with other robots, while visual information is often ignored. In the second set of experiments, the robots are situated in an arena without a light bulb. Moreover, robots are not provided with ambient light sensors.

The qualitative analysis revealed that 11 out of 20 evolutionary runs converge toward structured and coordinated behaviours (see examples below). In other two cases - namely runs 17 and 19 - the average performance is rather high but robots display behaviours that are structured and coordinated only initially, and later degenerate toward non-structured behaviours.

Evolutionary run 6: a test performed in simulation (single random trial corresponding about to 100 seconds). Run 6 is an example of the firts strategy (obtained 7 times on 11): robots exploit signal amplitude, visual information (LEDs are switched on and off), and sometimes infra red information. These replications are generally characterized by spatial pattern symmetry.

Evolutionary run 10: on left a test performed in simulation (single random trial corresponding about to 100 seconds). On right, a test performed with physical robots (1 random trial lasting 90 seconds, videos speeded up to 2x). Run 10 is an example of the second strategy (obtained 3 times on 11): robots exploit only signal amplitude (LEDs are always switched off). These replications are not characterized by spatial pattern symmetry.

Evolutionary run 8: on left a test performed in simulation (single random trial corresponding about to 100 seconds). On right, a test performed with physical robots (1 random trial lasting 7 minutes, videos speeded up to 5x).Run 8 is an example of the third strategy (obtained 1 time on 11): robots exploit signal amplitude and visual information (LEDs are always switched on), but the last one is used as a source of noise (without which the robots remains steady in the same motor state). This replication is not characterized by spatial pattern symmetry.

Also in this case, after an initial transitory phase, robots perform synchronised movements. Communication is exploited to achieve and maintain synchrony. The behaviours produced by the evolved controllers can be grouped into three strategies, described as follows.

An interesting example of the first strategy is given by run 6, which presents the highest average fitness within its group. This strategy is characterised by robots that periodically aggregate and disband, performing oscillatory movements around the centre of mass of the group and faraway from the walls. To do so, robots exploit vision, infrared proximity sensors and communication. Vision is mainly exploited in the aggregation phase, during which robots get close one to the other assuming a triangular formation. When robots are close enough to perceive each other through the infrared proximity sensors, they disband moving backward. Due to relative differences in robots positions and orientations with respect to the centre of mass of the group, the behaviour of the three robots is not well coordinated during the first oscillatory movements. However, the robots quickly converge toward a well coordinated behaviour. Notice also how the motor states vary through time, taking on many different values: this corresponds to a very structured behaviour, which is also well coordinated as the robots perform the same actions at the same time.

In the case of the second strategy (e.g. replication 10), the robots do not interact visually or through their proximity sensors. They mainly produce a behaviour structured in a sequence of atomic movements, such as backward motion on a large circle followed by forward motion on a small circle. These movements are performed without any reference to the position and orientation of the other robots or to the position and orientation of the robot in the arena, provided that robots are located far enough from walls. Robots exploit only the communication signal to coordinate, and the robots display synchronised movements without keeping any relation between their relative positions in the arena. As a consequence, coordinated movements are performed since the very beginning of the trial, because there is no need to achieve a particular spatial formation.

Finally, the third strategy includes only the controller evolved in run 8. This controller produces a peculiar behaviour characterised by four atomic movements that last from 10 to 40 seconds - i.e., a time span considerably longer than those observed in other evolutionary runs, - which are periodically repeated: (i) rotating several times to produce a nearly circular trajectory with a diameter of about 8 cm, (ii) rotating several times to produce a spiral trajectory with a diameter decreasing to 0 cm, (iii) rotating several times on the spot at full speed, (iv) rotating several times to produce a spiral trajectory with a diameter increasing from about 0 to about 8 cm. Also in this case, the movements of the robot are performed without any reference to the position and orientation of the other robots.

By testing how the evolved solution scale up to larger group of robots (formed by up to 48 individuals) we observed many of the solutions obtained in the first experiments and some of those obtained in the second experiment scale up rather well up.

2.3 Conclusions

We believe that the proposed methodology is particularly relevant for swarm robotics research, as it can efficiently synthesise self-organising, coordinated behaviours for a robotic swarm. In fact, there is a fundamental problem - referred to as the design problem - that arises in the development of self-organising behaviours for a group of robots. This problem consists in defining the appropriate individual rules that will lead to a certain global pattern, and it is particularly challenging due to the indirect relationship between control rules and individual behaviour, and between interacting individuals and the desired global pattern. In this respect, evolutionary robotics is particularly suited to synthesise self-organising behaviours. In fact, it bypasses the design problem as it relies on the automatic generation, test and selection of control solutions for the robotic system as a whole, without the need of an arbitrary decomposition of the given control problem into sub-problems (e.g., the desired global behaviour into individual behaviours and inter-individual interactions, as well as the individual behaviour in a set of control rules). The methodology we propose in this paper goes a step further in this direction: it promotes the evolution of coordinated behaviours without any constraint imposed by an explicit description of the desired solution. As a consequence, the proposed approach does not require a thorough knowledge of the system under study to devise the individual control rules, neither does it need a description of the desired solution to promote cooperative behaviours, as it can benefit of a task-independent, implicit utility function.

The proposed methodology represents a first step towards the evolution of self-organising behaviours for robotic swarms. In future work, we plan to exploit information theoretic measures in support of the evolution of task-oriented group behaviours. So far, we obtained synchrony without any constraint on the characteristics of the individual behaviour. We believe that a task-independent function can be successfully used in combination with a task-oriented one. The former should provide the drives to synthesise structured and coordinated behaviour. The latter should simply channel the evolutionary process towards individual and group behaviours that serve specific functionalities.

Another possible extension over the work presented in this paper concerns the use of heterogeneous robots. Using different controllers and/or different sensory-motor apparatuses, it should be possible to observe coordination among the robots that does not forcedly limit to synchronisation of the movements. Turn taking, entrainment and other forms of coordination become possible whenever the robots may have access to different sensory-motor experiences.

References

Sperati V., Trianni V., and Nolfi S. (2008). Evolving coordinated group behaviour thorugh maximization of mean mutual information. Swarm Intelligence Journal, Special Issue on Swarm Robotics, vol. 2, num 2-4, pp. 73-95. pdf